A $25 billion chip factory. Orbital AI satellites. One terawatt of compute per year. Tesla Terafab made huge promises on March 21, 2026. Here’s what’s real, what analysts think, and which questions haven’t been answered.

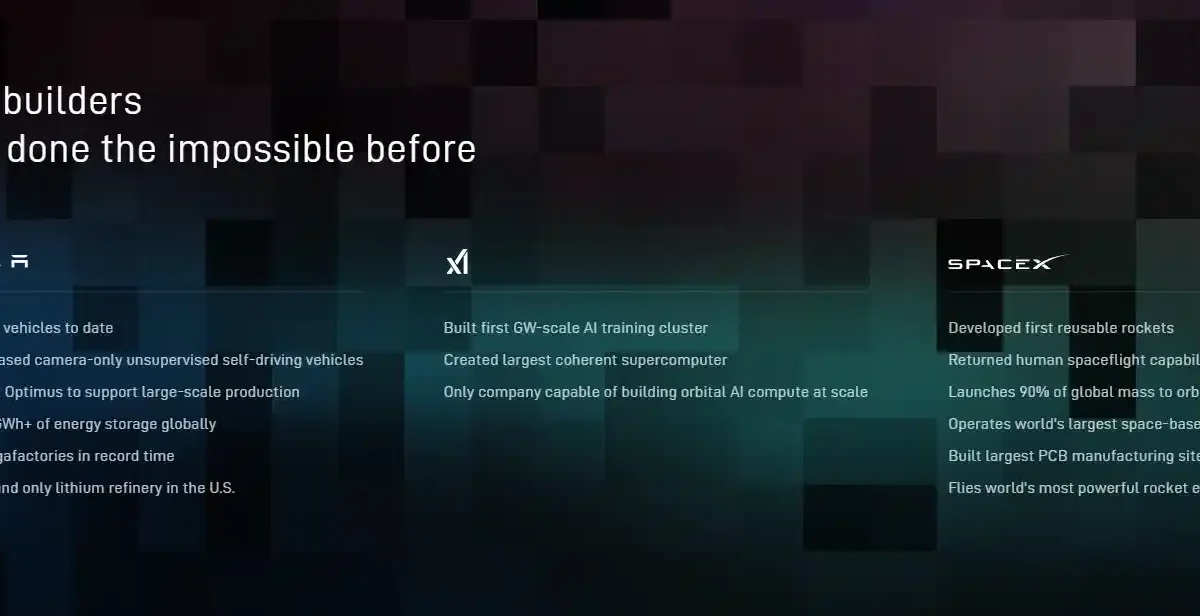

On March 21, 2026, Elon Musk stood inside Austin’s Seaholm Historic Power Plant, a decommissioned 1950s turbine hall, and announced Terafab: a joint venture between Tesla, SpaceX, and xAI, and a chip factory he called “the most epic chip-building exercise in history by far.” Two weeks later, it’s worth separating what’s actually confirmed from what’s still vision-board material.

Why Tesla, SpaceX, and xAI Need Their Own Chip Fab

The supply problem Musk described is real, even if the proposed solution is enormous. At Tesla’s Q4 2025 earnings call he flagged that TSMC, Samsung, and Micron were expanding, but not fast enough for what his three companies needed. xAI’s Grok models demanded more training compute. SpaceX was already planning a satellite constellation so large that it filed for FCC permission to orbit one million data center satellites. Optimus robots alone could, per Morgan Stanley analyst Andrew Percoco, eventually require 200 million chips per year, fifty times Tesla’s current total chip demand across all products.

The confirmed novel part of Terafab’s pilot design is the on-site iteration loop. The Giga Texas facility is meant to let engineers design a chip, fabricate it, test it, and revise the lithographic mask, all without shipping wafers elsewhere. Musk’s exact words on X: “Terafab will technically be two fabs, each making only one chip design.” No current fab anywhere operates this way. If it works, mask iteration that takes months at TSMC could get significantly faster. That’s a real advantage, independent of whether any terawatt projection ever materializes.

Tesla AI5 Chip vs D3 Space Chip: Two Very Different Products

Terafab is designed to make two distinct chips that share almost nothing except the process node. The first is the AI5, Tesla’s fifth-generation inference processor. It’s designed for edge workloads: running trained AI models efficiently inside vehicles, Cybercab robotaxis, and Optimus robots rather than training those models. Musk’s stated timeline puts small-batch production in late 2026, with volume production in 2027. Worth noting: Electrek reported that Tesla had already quietly delayed AI5 to mid-2027 before the Terafab announcement. Morgan Stanley puts any meaningful Terafab output at mid-2028 at the earliest, and only under an aggressive scenario.

The second chip, called the D3, is harder to evaluate. It’s described as a high-power processor hardened for the space environment, designed to survive cosmic radiation and temperature swings that would destroy consumer-grade silicon. Musk says 80% of Terafab’s output would go toward D3 chips for SpaceX’s orbital satellite network. No independent specifications for the D3 have been published. It does not yet exist as a commercial product anywhere.

Context: Tesla’s Dojo chip team was dissolved in August 2025. Since then, Tesla has relied entirely on external suppliers, primarily TSMC and Samsung, for its AI silicon. Terafab would be its first attempt at in-house fabrication, not a continuation of an existing program.

Space-Based AI Data Centers: The Physics Case and the Engineering Problem

Musk’s argument that orbit is a better place to run AI compute than Earth is grounded in real physics, even if the engineering is far from solved. Two problems limit ground-based AI data centers: power and heat. Solar irradiance in low Earth orbit runs roughly 35–40% stronger than at Earth’s surface and, in an orbit that stays in near-constant sunlight, almost never switches off. More importantly, heat rejection in vacuum happens through infrared radiators, with no water, no cooling towers, and no ambient temperature ceiling capping compute density.

Those are legitimate advantages. The engineering gaps are just as real. Getting enough hardware into orbit to constitute meaningful compute at scale, keeping it operational in a radiation environment for years, and returning processed data to Earth fast enough to be useful: none of these is a solved problem at anything approaching the scale Musk described. Starship is the only launch vehicle that makes orbital data centers economically discussable, which is precisely why this project exists as a Tesla-SpaceX-xAI joint venture rather than something any single company could attempt.
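The power and heat argument can be put in rough numbers. The sketch below is a back-of-the-envelope check, not a design calculation: it compares sunlight above the atmosphere with a common clear-sky reference at ground level, then uses the Stefan-Boltzmann law to size an ideal radiator. The 300 K panel temperature, 0.9 emissivity, two radiating faces, and the 1 MW heat load are all illustrative assumptions, and none of them come from Tesla or SpaceX.

```python
# Back-of-the-envelope check of the orbital power and heat argument.
# All inputs below are illustrative assumptions, not Terafab or SpaceX figures.

SIGMA = 5.670374419e-8      # Stefan-Boltzmann constant, W / (m^2 K^4)
SOLAR_CONSTANT = 1361.0     # approximate sunlight above the atmosphere, W/m^2
SURFACE_PEAK = 1000.0       # common clear-sky reference at ground level, W/m^2


def irradiance_gain(orbit_w_m2=SOLAR_CONSTANT, surface_w_m2=SURFACE_PEAK):
    """Fractional increase in sunlight in orbit relative to the surface."""
    return orbit_w_m2 / surface_w_m2 - 1.0


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, faces=2):
    """Ideal flat-panel area needed to radiate heat_w watts to deep space.

    Ignores absorbed sunlight and Earth's infrared, so a real panel
    would need to be larger than this estimate.
    """
    flux_per_face = emissivity * SIGMA * temp_k ** 4   # W/m^2
    return heat_w / (flux_per_face * faces)


gain = irradiance_gain()
area_per_mw = radiator_area_m2(heat_w=1e6, temp_k=300.0)

print(f"Orbital irradiance gain: {gain:.0%}")
print(f"Radiator area per MW at 300 K: {area_per_mw:,.0f} m^2")
```

Under these assumptions the irradiance gain lands at about 36%, inside the 35–40% range quoted above, and each megawatt of waste heat needs on the order of 1,200 square meters of radiator. Because radiated flux scales with the fourth power of temperature, running the panels hotter shrinks that area quickly, but the number still shows why heat rejection is an engineering problem rather than a free benefit.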

Terafab Analyst Reaction: The $25B vs $5 Trillion Problem

“Musk has no background in semiconductor production and a history of over-promising on goals and timelines.”

The analyst community has been pointed. Bernstein’s semiconductor team estimated that producing one terawatt of AI silicon annually would require more than 22 million GPU-equivalent wafer starts per year, implying total capital between $4 trillion and $5 trillion, not $25 billion. Morgan Stanley pegged meaningful chip output as unlikely before mid-2028. The $25 billion figure, Tesla’s CFO confirmed on the night, isn’t even inside Tesla’s 2026 capex plan yet. No construction timeline was given. No funding source beyond existing balance sheets was named.
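The size of that gap is easy to work out from the figures quoted above. The short sketch below uses only those numbers; the variable and function names are made up for illustration.

```python
# Scale gap between the announced budget and Bernstein's estimate,
# using only the figures quoted in this article.

ANNOUNCED_USD = 25e9                 # announced Terafab figure
BERNSTEIN_RANGE_USD = (4e12, 5e12)   # estimated capital for 1 TW per year


def multiple_of_budget(estimate_usd, budget_usd=ANNOUNCED_USD):
    """How many times larger the estimate is than the announced budget."""
    return estimate_usd / budget_usd


low_multiple, high_multiple = (multiple_of_budget(x) for x in BERNSTEIN_RANGE_USD)

# Share of the estimated requirement that the announced budget would cover.
best_case_share = ANNOUNCED_USD / BERNSTEIN_RANGE_USD[0]
worst_case_share = ANNOUNCED_USD / BERNSTEIN_RANGE_USD[1]

print(f"Estimate is {low_multiple:.0f}x to {high_multiple:.0f}x the announced budget")
print(f"Announced budget covers {worst_case_share:.2%} to {best_case_share:.2%} of it")
```

On these numbers the estimate is 160 to 200 times the announced budget, which means $25 billion would cover well under 1% of what Bernstein says the terawatt target requires.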

Musk’s Battery Day in September 2020 is a useful comparison. He promised 3 terawatt-hours of 4680 cell production by 2030 and a 50% cost cut through a novel dry electrode process. The 4680 program exists, but it is years behind schedule, far smaller than announced, and has required multiple process revisions that Musk didn’t anticipate. The pattern isn’t that Musk’s projects fail entirely. It’s that timelines stretch and scale shrinks between the announcement and the delivery. Terafab’s pilot facility at Giga Texas is a concrete, testable bet. The terawatt-in-orbit vision is a different category of claim and should be read accordingly.
