Your Claude Code bill doesn’t lie. You’re hitting the Claude token limit before the session even gets useful, spending tokens on files Claude shouldn’t be reading and on context it already processed three requests ago. That’s not a usage problem. It’s an architecture problem, and this guide explains how to fix it.

The Real Cost of Hitting the Claude Token Limit

Claude Sonnet 4.6: $3 per million input tokens, $15 per million output tokens. Claude Opus 4.6: $5 per million input tokens and $25 per million output tokens. These costs seem reasonable until you realize that a coding agent burns through tokens 10 to 50 times faster than a conversational one.
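To see how those rates add up, here is a minimal cost sketch. It assumes the per-million-token prices quoted above (verify them on the pricing page) and ignores caching and batch discounts; the dictionary keys are labels for this example, not real API model IDs.

```python
# Per-million-token rates quoted in this article (USD). These are
# assumptions to verify against anthropic.com/pricing; keys are labels,
# not API model IDs.
RATES = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 5.00, "output": 25.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at standard, uncached rates."""
    rate = RATES[model]
    return (input_tokens * rate["input"] + output_tokens * rate["output"]) / 1_000_000


if __name__ == "__main__":
    # Hypothetical agent turn: 150k tokens of context in, 4k tokens out.
    for model in RATES:
        print(model, round(request_cost(model, 150_000, 4_000), 4))
```

With those hypothetical sizes, one turn costs $0.51 on Sonnet 4.6 and $0.85 on Opus 4.6. An agentic session that repeats that turn 50 times spends about $25 on Sonnet alone, which is why the multiplier matters more than the headline rate.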

Claude models used to charge a premium for prompts over 200,000 tokens, a steep cliff that penalized developers doing the most context-heavy work. As of early 2026, Sonnet 4.6 and Opus 4.6 no longer carry that surcharge; the full one-million-token context window is billed at standard per-token rates.

That is genuinely good news, but it does not make context free. Every token still costs money, every round-trip still takes time, and an overloaded context window degrades output quality, a cost that goes beyond the API fee.

Check current pricing yourself before you build anything: anthropic.com/pricing

Why Claude Re-reads 27,000+ Files by Default

No persistent memory. That’s the core problem. Every Claude Code session starts cold: no memory, no graph, no awareness of your Claude token limit until it’s already burning, no understanding of which modules touch which, no map of what a given change will break. So it reads everything it can find.

On a Next.js monorepo, that can mean tens of thousands of files scanned to answer a question that needs maybe 12 of them. The tokens stack up not because Claude is careless, but because, without a knowledge graph, reading everything is the only safe strategy. That is fixable at the architectural level.

Implementing a Persistent Knowledge Graph with code-review-graph

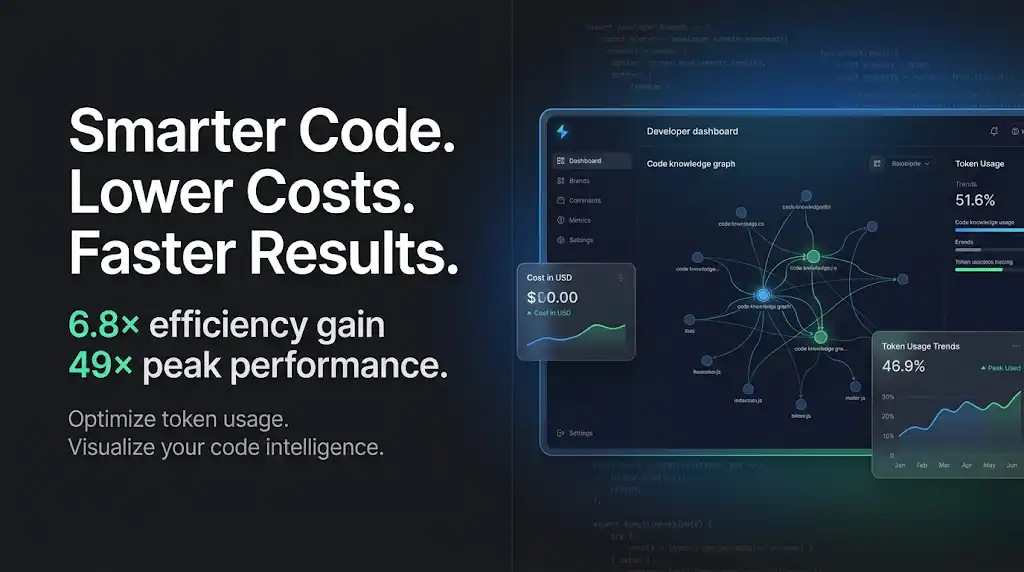

In March 2026, a developer named Tirth Kanani published code-review-graph. It reached GitHub Trending within days. Its headline benchmark, 49x fewer tokens on a Next.js monorepo, attracted equal parts scepticism and curiosity.

The scepticism was reasonable. The number is real, but it needs context.

How Blast-Radius Analysis Achieves 49x Peak Token Reduction

The tool builds a persistent local knowledge graph of your codebase using Tree-sitter AST parsing across 12 languages. When Claude needs to analyze a change, it queries the graph for the change’s blast radius instead of reading every source file: which files the change affects, which dependencies are at risk, and where test coverage is missing.
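The core of a blast-radius query can be sketched as a reverse-dependency walk. This is a minimal illustration, assuming the graph is reduced to file-level "imports" edges; code-review-graph’s real graph is built from AST nodes and tracks more than this, and none of the names below are its API.

```python
from collections import deque


def blast_radius(imports: dict[str, set[str]], changed: set[str]) -> set[str]:
    """Return every file that transitively depends on a changed file.

    `imports` maps each file to the files it imports directly. This is a
    hypothetical, simplified model, not code-review-graph's actual schema.
    """
    # Invert the edges: for each file, record who imports it.
    dependents: dict[str, set[str]] = {}
    for src, targets in imports.items():
        for dst in targets:
            dependents.setdefault(dst, set()).add(src)

    # Breadth-first walk from the changed files along the reversed edges.
    impacted = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in impacted:
                impacted.add(parent)
                queue.append(parent)
    return impacted


if __name__ == "__main__":
    graph = {
        "app/page.tsx": {"lib/api.ts", "components/Header.tsx"},
        "components/Header.tsx": {"lib/theme.ts"},
        "lib/api.ts": {"lib/http.ts"},
        "tests/api.test.ts": {"lib/api.ts"},
        "lib/theme.ts": set(),
        "lib/http.ts": set(),
    }
    print(sorted(blast_radius(graph, {"lib/http.ts"})))
```

Changing `lib/http.ts` in this toy graph impacts `lib/api.ts`, `app/page.tsx` and `tests/api.test.ts`; `components/Header.tsx` and `lib/theme.ts` are pruned, so Claude only needs to read four of six files. The test file showing up in the result is also how a graph can answer "which tests cover this change?"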

The benchmarks, verified against real open-source repositories:

- Code reviews: 6.8x average token reduction (tested on httpx, FastAPI, Next.js)

- Live coding tasks: 14.1x average reduction, up to 49x peak on large repos

- Naive-vs-graph overall average: 8.2x reduction

The 49x is the peak, not the floor. For small single-file changes in tiny packages, the graph metadata can actually exceed the raw file size; the tool says so explicitly in its documentation. The payoff compounds on multi-file changes, where irrelevant code gets pruned entirely.
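A reduction factor is simply naive tokens divided by graph-scoped tokens, so it can drop below 1 when the graph’s metadata outweighs a tiny file. The sketch below makes that concrete; the token counts are hypothetical, not benchmark data, and the $3 input rate is the Sonnet 4.6 figure quoted earlier.

```python
SONNET_INPUT_PER_M = 3.00  # USD per million input tokens, as quoted above


def compare(naive_tokens: int, graph_tokens: int) -> dict[str, float]:
    """Compare a read-everything context with a graph-scoped one."""
    factor = naive_tokens / graph_tokens
    saved = naive_tokens - graph_tokens  # negative when the graph costs more
    return {
        "reduction_x": round(factor, 1),
        "tokens_saved": saved,
        "usd_saved": saved * SONNET_INPUT_PER_M / 1_000_000,
    }


if __name__ == "__main__":
    # Hypothetical multi-file change on a large repo.
    print(compare(490_000, 10_000))
    # Hypothetical one-line fix in a tiny package: the graph loses.
    print(compare(800, 1_100))
```

The first case works out to 49.0x and $1.44 saved per request; the second to roughly 0.7x, meaning the graph adds 300 tokens. Averages like 8.2x sit between those extremes.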

Blast-radius analysis has perfect recall: it never misses an impacted file. It over-predicts in some cases, a deliberately conservative trade-off, but it never under-predicts. For production codebases, that asymmetry is the right one.
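Recall and precision make the trade-off precise: recall is the share of truly impacted files that were flagged, precision is the share of flagged files that were truly impacted. A small sketch with hypothetical file sets:

```python
def recall_precision(predicted: set[str], actual: set[str]) -> tuple[float, float]:
    """Score a predicted impact set against the files that really changed behavior."""
    hits = len(predicted & actual)
    # Define empty-set edge cases as perfect, since nothing was missed or wasted.
    recall = hits / len(actual) if actual else 1.0
    precision = hits / len(predicted) if predicted else 1.0
    return recall, precision


if __name__ == "__main__":
    actual = {"lib/api.ts", "app/page.tsx", "tests/api.test.ts"}
    predicted = actual | {"lib/cache.ts", "app/layout.tsx"}  # two extra files
    print(recall_precision(predicted, actual))
```

Here recall is 1.0 and precision is 0.6: the over-prediction costs extra tokens for two unneeded files, but no impacted file goes unreviewed. The reverse error (precision 1.0, recall below 1.0) would be cheaper and silently wrong.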

Setting Up AST-Level Memory in Under 5 Minutes

pip install code-review-graph

code-review-graph install # auto-detects your platform

code-review-graph build # parses entire codebase

code-review-graph watch # auto-updates on file changes

All of the data is stored in SQLite on your local machine. No external database is required, and nothing goes to the cloud. The configuration the tool generates uses uvx-based paths, so it works on any machine.
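Storing the graph in SQLite means a blast-radius query can run as a single recursive SQL query instead of re-parsing files. The sketch below uses a hypothetical one-table schema (`imports(src, dst)`), not code-review-graph’s actual database layout, with Python’s built-in `sqlite3` module.

```python
import sqlite3


def open_graph(path: str = ":memory:") -> sqlite3.Connection:
    """Create a tiny edge table. Hypothetical schema, not code-review-graph's."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS imports ("
        " src TEXT NOT NULL,"
        " dst TEXT NOT NULL,"
        " PRIMARY KEY (src, dst))"
    )
    return conn


def add_imports(conn: sqlite3.Connection, edges: list[tuple[str, str]]) -> None:
    """Record (importer, imported) pairs; duplicates are ignored."""
    conn.executemany("INSERT OR IGNORE INTO imports (src, dst) VALUES (?, ?)", edges)
    conn.commit()


def dependents_of(conn: sqlite3.Connection, changed: str) -> list[str]:
    """The changed file plus everything that transitively imports it."""
    rows = conn.execute(
        """
        WITH RECURSIVE impacted(file) AS (
            SELECT ?
            UNION  -- UNION (not UNION ALL) drops repeats, so cycles terminate
            SELECT imports.src FROM imports
            JOIN impacted ON imports.dst = impacted.file
        )
        SELECT file FROM impacted ORDER BY file
        """,
        (changed,),
    ).fetchall()
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = open_graph()  # pass a file path instead to persist between sessions
    add_imports(conn, [
        ("lib/api.ts", "lib/http.ts"),
        ("app/page.tsx", "lib/api.ts"),
        ("tests/api.test.ts", "lib/api.ts"),
        ("components/Header.tsx", "lib/theme.ts"),
    ])
    print(dependents_of(conn, "lib/http.ts"))
```

Because the database file survives between sessions, a watcher only has to rewrite the edges for files that changed, which is the idea behind incremental updates.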

Once it is installed, Claude Code queries the graph by default instead of scanning the entire codebase. You don’t have to change your workflow at all.

Tactical Context Pruning: The Under-60-Line Rule

Your CLAUDE.md file is context that loads in every single session. Every line costs tokens before Claude writes a single character of useful output. Keep it under 60 lines, not as a guideline but as a hard constraint.
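A quick way to enforce the rule is a small audit you can run locally or in CI. This sketch counts lines and gives a rough token estimate; the four-characters-per-token ratio is a common heuristic for English text, not a real tokenizer, so treat the estimate as approximate.

```python
from pathlib import Path

MAX_LINES = 60        # the hard limit argued for above
CHARS_PER_TOKEN = 4   # rough heuristic; an assumption, not a tokenizer


def audit_claude_md(text: str) -> dict[str, object]:
    """Report line count, approximate token cost, and whether the limit is exceeded."""
    lines = text.splitlines()
    return {
        "lines": len(lines),
        "approx_tokens": len(text) // CHARS_PER_TOKEN,
        "over_limit": len(lines) > MAX_LINES,
    }


if __name__ == "__main__":
    path = Path("CLAUDE.md")
    if path.exists():
        print(audit_claude_md(path.read_text(encoding="utf-8")))
    else:
        print("no CLAUDE.md in the current directory")
```

Failing a CI job whenever `over_limit` is true turns the guideline into the hard constraint described above.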

Audit what’s actually in there. Project setup instructions Claude never uses mid-session? Cut them. Verbose style guides that belong in a linter config? Move them. Anything that doesn’t change Claude’s behavior on the current task is dead weight, paid for in real dollars on every request.

The same principle applies to system prompts and injected context in agentic pipelines. Prompt caching cuts input token costs by up to 90% for repeated context, but only if that context is actually stable and reused. Cache reads are billed at roughly 10% of the standard input rate. Design your context to be cacheable: stable prefixes, consistent structure, and no timestamps or session IDs embedded in cached blocks. It’s the fastest way to fix your Claude token limit problem without rewriting a single line of code.
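The arithmetic behind "up to 90%" is easy to check. This sketch compares sending the same stable prefix uncached versus cached. It assumes reads at 0.10x the input rate (as stated above) and a one-time cache write at 1.25x, the premium Anthropic has documented for its default short-lived cache; confirm both multipliers on the pricing page. It also assumes every request after the first hits the cache.

```python
def prefix_cost(prefix_tokens: int, requests: int, input_rate_per_m: float,
                cached: bool, read_mult: float = 0.10,
                write_mult: float = 1.25) -> float:
    """USD spent sending the same stable prefix on every request.

    read_mult and write_mult are assumptions to verify against current pricing.
    """
    per_request = prefix_tokens * input_rate_per_m / 1_000_000
    if not cached or requests == 0:
        return per_request * requests
    # First request writes the cache; every later request is assumed to hit it.
    return per_request * write_mult + per_request * read_mult * (requests - 1)


if __name__ == "__main__":
    # Hypothetical: 20k-token stable prefix, 50 requests, Sonnet input at $3/M.
    plain = prefix_cost(20_000, 50, 3.0, cached=False)
    cached = prefix_cost(20_000, 50, 3.0, cached=True)
    print(f"uncached ${plain:.3f}, cached ${cached:.3f}, saved {1 - cached / plain:.1%}")
```

In this example the prefix costs $3.00 uncached and about $0.37 cached, a saving of roughly 88%. A single timestamp inside the prefix changes it on every call, so every request pays the write premium instead of the read discount.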

Advanced Optimization: Subagents and Trigger Tables

One Claude session reading 40,000 tokens of context to answer a narrow question is expensive. Four specialized subagents each reading 2,000 tokens of scoped context is cheaper, faster, and often more accurate.

The pattern: a coordinator agent receives the task, identifies the domain (architecture question? dependency audit? test gap analysis?), and routes to a specialist with a trimmed, task-specific context. Each specialist uses the knowledge graph to pull only the structural data it needs.

Build a trigger table that maps task types to context profiles. “Debug failing test” → load only the affected module graph + test file. “Pre-merge review” → blast-radius analysis + dependency chain. “Onboarding query” → community summaries + flow snapshots. The code-review-graph tool ships with built-in MCP prompts for workflows like these.
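A trigger table is just a lookup from task type to a scoped context profile, with a coordinator step in front of it. In the sketch below, the coordinator is a naive keyword classifier standing in for what would more likely be a cheap LLM classification call; the profile names and context-source labels are hypothetical and are not code-review-graph’s MCP prompt names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextProfile:
    """What a specialist subagent may load. Field values are hypothetical labels."""
    name: str
    sources: tuple[str, ...]


# Trigger table from the text: task type -> scoped context profile.
TRIGGER_TABLE: dict[str, ContextProfile] = {
    "debug_failing_test": ContextProfile("debugger", ("affected_module_graph", "test_file")),
    "pre_merge_review": ContextProfile("reviewer", ("blast_radius", "dependency_chain")),
    "onboarding_query": ContextProfile("guide", ("community_summaries", "flow_snapshots")),
}

# Naive keyword rules standing in for the coordinator agent; checked in order.
KEYWORDS: dict[str, tuple[str, ...]] = {
    "debug_failing_test": ("failing test", "test fails", "flaky"),
    "pre_merge_review": ("review", "merge", "pull request"),
    "onboarding_query": ("how does", "where is", "explain"),
}


def route(task: str) -> ContextProfile:
    """Pick the first task type whose keywords appear in the request."""
    text = task.lower()
    for task_type, words in KEYWORDS.items():
        if any(word in text for word in words):
            return TRIGGER_TABLE[task_type]
    raise ValueError(f"no trigger matches: {task!r}")


if __name__ == "__main__":
    for request in ("Debug the failing test in checkout",
                    "Pre-merge review for the auth refactor",
                    "How does the billing flow work?"):
        profile = route(request)
        print(f"{request!r} -> {profile.name}: {', '.join(profile.sources)}")
```

Raising on an unmatched request, rather than falling back to "load everything", keeps the expensive default from creeping back in; a real pipeline might route those requests to a human or a general-purpose agent instead.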

This isn’t over-engineering. On a 10-engineer team running Claude Code daily, the cumulative token savings justify the setup time within a week.

Context Overload: How Claude Loses the Plot at 60%

At 60–70% context window utilization, Claude’s output quality measurably degrades. Not occasionally. Reliably.

The model hasn’t hit a hard limit, so it doesn’t fail visibly. It just gets worse: more hallucinations, weaker reasoning, and responses that technically answer the question but miss the actual problem. Developers running long agentic sessions hit this constantly without realizing it.

The fix is to treat 60% as a hard ceiling, not a soft warning. Monitor context usage. When you’re approaching the threshold, summarize and restart the session rather than pushing through. A clean 20% context session beats a degraded 75% context session on output quality every time.
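Treating 60% as a hard ceiling is easiest when the check is mechanical. This sketch turns a token count and a window size into a decision; where the token count comes from is up to you (for example, the `input_tokens` value the Messages API reports in each response’s `usage`), and the 5-point early-warning margin is an assumption of this example.

```python
CEILING = 0.60  # hard stop, per the rule above


def utilization(used_tokens: int, window_tokens: int) -> float:
    """Fraction of the context window currently occupied."""
    return used_tokens / window_tokens


def next_action(used_tokens: int, window_tokens: int, margin: float = 0.05) -> str:
    """Decide whether to continue, prepare a summary, or summarize and restart."""
    u = utilization(used_tokens, window_tokens)
    if u >= CEILING:
        return "summarize_and_restart"
    if u >= CEILING - margin:
        return "prepare_summary"  # start condensing state before the hard stop
    return "continue"


if __name__ == "__main__":
    window = 200_000  # hypothetical window size; use your model's actual limit
    for used in (100_000, 112_000, 120_000):
        print(used, next_action(used, window))
```

Warning slightly before the ceiling gives the session room to write a clean summary while its reasoning is still sharp, rather than asking an already-degraded context to summarize itself.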

The knowledge graph helps here too: by reducing how many tokens each task requires, you stay below the danger threshold longer.

Quick Wins: Commands to Save Your Token Budget Immediately

Run these today, before touching anything architectural:

# Check your current graph stats

code-review-graph status

# Use minimal output mode for routine queries (80–150 tokens vs 500+)

# Already built into the MCP tools via detail_level="minimal"

# Get scoped impact before any review

code-review-graph detect-changes

# Incremental update instead of full rebuild

code-review-graph update

On the prompt side: output constraints work. Telling Claude to keep responses under a specific token count isn’t just a budget move; a 2026 study found that brevity constraints on large models improved accuracy by 26 percentage points on certain benchmarks. Shorter isn’t just cheaper. It’s often better.

Cache your system prompts. If you’re hitting the API directly, use cache_control at the request level on any context block that stays stable across calls. The 90% cost reduction on cache reads is the single highest-leverage optimization available without changing your architecture.
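Here is a minimal sketch of a cache-friendly request for the Messages API, combining a cacheable system block with an output cap. It only builds the parameters, so it runs without network access; the model ID is a placeholder to replace with a real one from Anthropic’s model list, and the word limit in the prompt is an illustrative choice. Marking a stable content block with `cache_control` is one documented way to enable caching; the block must also exceed a model-specific minimum length before it is actually cached.

```python
import json

MODEL = "claude-sonnet-4-6"  # placeholder model ID; check Anthropic's model list


def build_request(stable_context: str, question: str, max_tokens: int = 300) -> dict:
    """Messages API parameters with the stable system block marked cacheable."""
    return {
        "model": MODEL,
        "max_tokens": max_tokens,  # hard output cap, backing up the brevity instruction
        "system": [
            {
                "type": "text",
                "text": stable_context,  # keep byte-identical across calls
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": [
            # Volatile content (the question) stays outside the cached prefix.
            {"role": "user", "content": f"{question}\n\nAnswer in under 150 words."}
        ],
    }


if __name__ == "__main__":
    params = build_request("Project conventions go here.", "Which modules import lib/http.ts?")
    print(json.dumps(params, indent=2))
    # To send it (requires the `anthropic` package and ANTHROPIC_API_KEY):
    #   import anthropic
    #   response = anthropic.Anthropic().messages.create(**params)
    #   print(response.usage)  # reports cache_creation_input_tokens / cache_read_input_tokens
```

Checking the cache fields in `usage` on the second call is the simplest way to confirm that the prefix really is stable: a nonzero `cache_read_input_tokens` means the discount applied.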

Summary: Context Mastery is the New Competitive Edge

The teams shipping fastest with Claude Code in 2026 don’t have bigger budgets; they treat context as a first-class engineering concern.

A persistent knowledge graph eliminates re-scanning the same files. A disciplined CLAUDE.md keeps session startup lean. Prompt caching means repeated context isn’t reprocessed at full price. Subagents give each task its own scoped context window and keep every window small. Staying below 60% context utilization keeps output quality high.

code-review-graph gives you the graph layer without engineering anything new. The benchmark data is legitimate, setup takes minutes, and the token savings stack up in every session. The Claude token limit is an engineering problem. Treat it like one.
